It's that time of year again when Jensen Huang, Founder and CEO of NVIDIA, amazes us with a recap of what NVIDIA has accomplished over the past year and dazzles us with what we can expect in the year to come. Sporting his iconic leather jacket, Jensen kicked off his keynote in style with an overview of what the next hour and a half would cover. Use the link above to view the keynote presentation in its entirety; below, I'm going to hit a few of the impressive highlights that stuck with me this time.

RTX & Omniverse

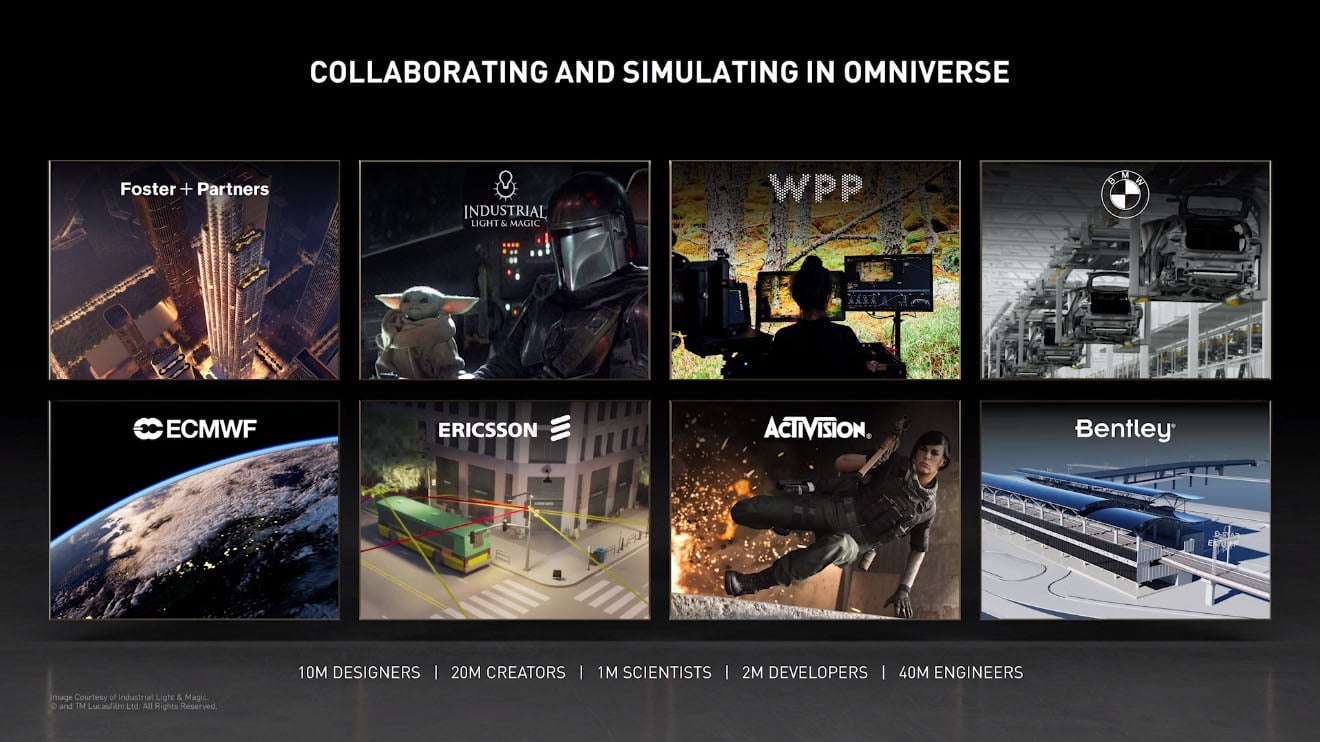

Just as I expected, this GTC was a "tick" in the standard tick-tock cadence that NVIDIA follows with its GPU Technology Conferences. The RTX introduction in the keynote was really just a springboard for Jensen to talk about the ever-impressive NVIDIA Omniverse technology. Omniverse is a very powerful multi-GPU, real-time simulation and collaboration platform. This technology, along with NVIDIA Isaac for robotics, showed us exactly what you can do with digital twins. The most impressive piece was watching Dr. Milan Nedeljkovic, who heads Production at BMW AG, show how BMW is using Omniverse in its automobile production plants. It was stunning.

Honestly, it was a huge breakthrough, and it demonstrated exactly how you would expect technology of this magnitude to be used. The list of companies participating in Omniverse was impressive, and it includes our sister company BOXX Technologies, recognized for developing purpose-built, high-end NVIDIA-Certified workstations for use with Omniverse.

Data Center - The New Unit of Computing

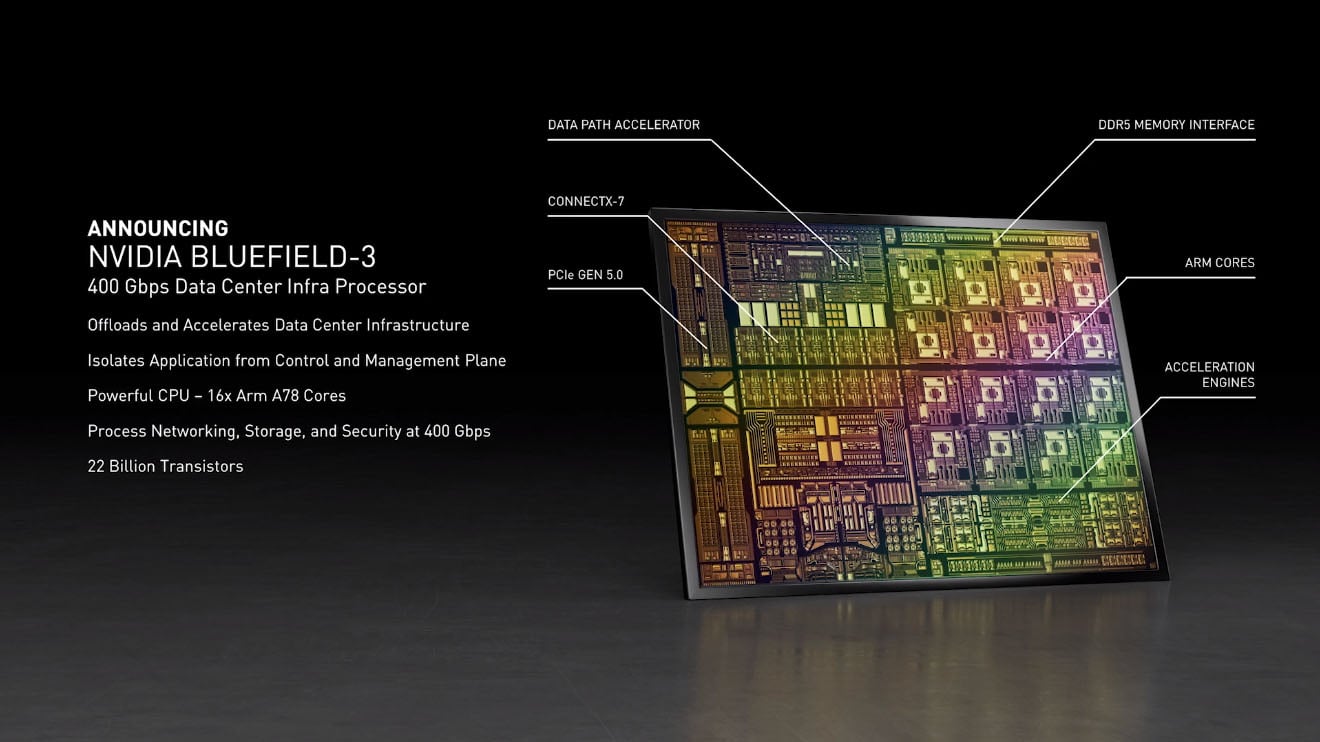

Jensen began by echoing something that we've been saying for years now: cloud computing and artificial intelligence are driving fundamental changes in how data centers are architected. Because of the sheer power consumption of the latest and greatest AI accelerator cards, data centers are being re-engineered around the power and cooling models needed to support them. But it's more than that now. Moving from a monolithic model past software-defined data centers, disaggregated scale-out infrastructure, and GPU-accelerated computing, we've now arrived at DPU-accelerated infrastructure.

Enter NVIDIA® BlueField® DPUs. By offloading, accelerating, and isolating a broad range of advanced networking, storage, and security services, BlueField DPUs provide a secure and accelerated infrastructure for any workload in any environment. BlueField DPUs combine powerful computing, full data-center-infrastructure-on-a-chip architecture (DOCA) programmability, and high-performance networking to address the most demanding workloads.

Alongside the introduction of BlueField-3, Jensen also announced the DOCA 1.0 SDK for BlueField DPUs, support for hyperscale, enterprise, supercomputing, and hyper-converged infrastructure, and a full-blown BlueField partner ecosystem. Jensen even shared that BlueField-4 is due out in another 18 months and will provide 10x the performance of BlueField-3.

DGX, DGX, DGX

Yes, more updates to the DGX line are here. I won't spend too much time recapping this section, as the updates mainly add the latest Ampere processors (NVIDIA A100 GPUs) to the existing lineup. The already beefy NVIDIA DGX Station now comes with 4 NVIDIA A100 80GB GPUs. The DGX SuperPOD now supports NVIDIA A100 80GB GPUs as well, plus BlueField-2 and NVIDIA's Base Command management and orchestration tool. The DGX SuperPOD starts at $7,000,000 for those interested.

Likely the coolest part of this piece of the keynote was Jensen talking about how NAVER (in Korea) has been using the NVIDIA DGX SuperPOD to create language-understanding AI services. He also mentioned NVIDIA Clara, which is expanding with 4 new models that help with genomics and drug discovery, and talked about how Recursion is using its DGX SuperPOD (aka BioHive-1) to generate, analyze, and derive insight from biological and chemical datasets. These use cases are by far some of the most impressive things to hear about in the field of AI, partly because of the recent COVID pandemic, but also because so much data exists that still needs to be put to use.

The Unveiling of Grace™

NVIDIA Grace is the company's first data center CPU, an Arm-based processor that will deliver 10x the performance of today’s fastest servers on the most complex AI and high-performance computing workloads. NVIDIA Grace is a highly specialized processor targeting workloads such as training next-generation NLP models that have more than 1 trillion parameters. When tightly coupled with NVIDIA GPUs, a Grace CPU-based system will deliver 10x faster performance than today’s state-of-the-art NVIDIA DGX™-based systems, which run on x86 CPUs.

Under the hood of Grace’s performance is 4th-generation NVIDIA NVLink interconnect technology, which provides a record 900 GB/s connection between Grace and NVIDIA GPUs to enable 30x higher aggregate bandwidth compared to today’s leading servers. Grace will also utilize an innovative LPDDR5x memory subsystem that will deliver twice the bandwidth and 10x better energy efficiency compared with DDR4 memory. The new architecture provides unified cache coherence with a single memory address space, combining system and HBM GPU memory to simplify programmability.
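To put the 900 GB/s figure in perspective, here is a rough back-of-the-envelope sketch in Python. It is not from the keynote: it assumes the 1-trillion-parameter model is stored as 16-bit floats, treats 900 GB/s as a sustained transfer rate, and uses a hypothetical 64 GB/s baseline link (roughly a PCIe Gen4 x16 connection at its bidirectional peak) purely for comparison. The function names are made up for illustration.

```python
# Back-of-the-envelope sketch. Only the 900 GB/s link speed and the
# 1-trillion-parameter model size come from the keynote; everything
# else below is an assumption made for illustration.

NUM_PARAMS = 10**12            # "more than 1 trillion parameters"
BYTES_PER_PARAM = 2            # assumption: weights stored as 16-bit floats
NVLINK_GB_PER_S = 900          # Grace <-> GPU NVLink figure from the keynote
BASELINE_GB_PER_S = 64         # assumption: hypothetical PCIe Gen4 x16-class link


def model_size_gb(num_params: int, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move size_gb over a link, ignoring latency and overhead."""
    return size_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    size = model_size_gb(NUM_PARAMS)
    print(f"Model weights: {size:.0f} GB")
    print(f"Over NVLink:   {transfer_seconds(size, NVLINK_GB_PER_S):.2f} s")
    print(f"Over baseline: {transfer_seconds(size, BASELINE_GB_PER_S):.2f} s")
```

Under these assumptions, the weights alone come to about 2 TB, which would cross the Grace-to-GPU link in a little over 2 seconds versus roughly half a minute on the assumed baseline. Real-world numbers will differ, since this ignores protocol overhead, memory bandwidth limits, and how the model is actually sharded.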

Sounds great... but Grace isn't expected to be available until the beginning of 2023, so we may see some changes before the product is fully released.

NVIDIA EGX Enterprise Platform and NVIDIA Aerial A100

Jensen took time to go over the EGX Enterprise platform, mainly because all of the NVIDIA optimizations for compute and data transfer can now be plumbed through the VMware stack, so AI workloads can be distributed across multiple systems and still achieve bare-metal performance. With BlueField, the VMware stack itself is also offloaded and accelerated, so everything just performs better. Think of all the possibilities now.

Also discussed was NVIDIA's new AI-on-5G platform, called Aerial A100. The AI-on-5G platform pairs the NVIDIA Aerial SDK (released in 2019) with the NVIDIA BlueField®-2 A100, a converged card that combines GPUs and DPUs. This creates an optimal platform for managing precision manufacturing robots, automated guided vehicles, drones, wireless cameras, self-checkout aisles, and hundreds of other ground-breaking projects.

That's Not All...

NVIDIA announced many other items that I just don't have time to go over in full detail here, including state-of-the-art software SDKs like NVIDIA Maxine, updates to DRIVE, the Hyperion 8 AV platform, and even the Orin central computer. I encourage you to watch the NVIDIA GTC 2021 keynote presentation when you get a chance to see everything NVIDIA is releasing this year.

Drop us a comment below to tell us what your favorite part of the keynote was.